AI is here, but is it a brave new world?

We live in a world where a computer can beat the world chess champion and a robot dog not only opens doors but has now been taught how to overcome human interference.

The rapid pace at which the realm of Artificial Intelligence, or AI, is developing can feel bewildering at times and, while some people say it could help save humanity, there are others who believe it could destroy us all.

As Professor Stephen Hawking said a couple of years ago, the creation of powerful artificial intelligence will be “either the best, or the worst thing, ever to happen to humanity”.

It’s a subject that Professor Hassan Ugail, Director of the Centre of Visual Computing at the University of Bradford, has been engrossed in for years, and when it comes to AI he’s keen to dispel some myths.

“Many people are scared of Artificial Intelligence and the potential impact it can have on society, and I think it’s important to discuss why it may not be something we need to fear, but more something that needs to be properly understood,” he says.

AI has actually been around for decades but we’re only just beginning to see the scale of its untapped potential. “In the next 10 or 20 years no matter what business you are in, there will be a component of AI in it.

“So whether it’s banking or journalism, it’s not about if AI will affect you, but how you are going to integrate it into your work.”

Part of the problem is that many people don’t necessarily understand what AI is and what it can and can’t do. It isn’t about robots (although it can be embedded in one); it’s more to do with trying to use a piece of code to create a kind of computer mind that can think like a human.

“These codes may be to do with face recognition or news writing and the idea is you let them learn,” says Prof Ugail. “There’s going to be an era when computer programs can actually learn by themselves and create other computer programs without any human input, and as with every new invention that can bring good and bad things. But the aim of AI is to benefit humanity not to try and harm or destroy it.”

He points out that AI is already an integral part of everyday life – it’s used in voice commands on smartphones and in apps that scan clothes – and goes from the fairly rudimentary to something far more complex.

“People often think that AI has to be some weird or wonderful machine, but that isn’t the case. Something like a spellchecker is a good piece of AI. You might think it isn’t, but it does help you make fewer mistakes.”

In December, AlphaZero, the game-playing AI created by Google sibling DeepMind, beat the world’s best chess-playing computer program, having taught itself how to play in under four hours.

“It was only taught the rules of chess and shown pictures of a chess board. This piece of AI actually learnt by itself, and it could beat a human within 24 hours. But one must bear in mind, this piece of AI can only play chess, it can’t make art or write a book,” says Prof Ugail.

We can now teach AI to be better at some things than we are, but this doesn’t mean it can replace us.

It’s already used by the NHS and in the future is likely to play a crucial role in helping ease the burden on overstretched resources. “If you are a doctor or a pathologist making a diagnosis on cancer, what the AI can do is it knows how to diagnose certain cancers so what it’s doing is telling the doctor what is right and what is wrong.

“It isn’t about replacing the doctor at all. I don’t see any AI replacing humans; it’s about helping us to make better decisions,” he says.

At the University of Bradford, researchers have recently developed an AI tool that has been taught to detect certain types of cancers from images of tissues.

“At the moment doctors need to look carefully at such images before making a decision.

“Our system can be used in hospitals and submitted to the computer which may lead to preliminary tests and further confirmation before it’s given to the doctor.”

In other words it can save doctors and nurses valuable time and help make the NHS more efficient.

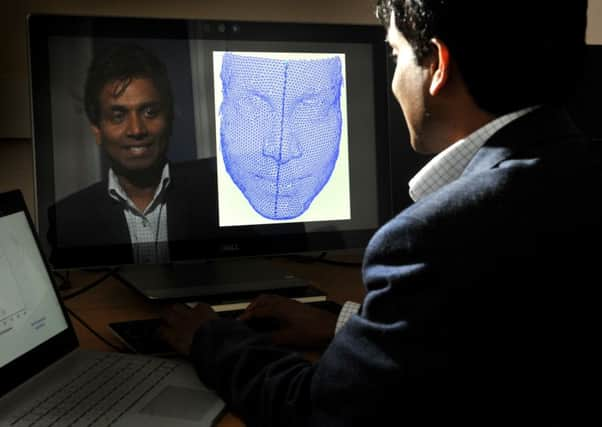

There are new developments happening all the time and Prof Ugail has been involved in the field of face recognition and algorithms. “If you go through passport control, a camera takes a picture of your face and matches it with your passport. That’s quite standard face-matching but what we are looking at is far more complex face recognition problems.

“Say you have a million faces in a database and you have a small part of a face and want to see whether that person exists in this database. That would be a huge task for a human, in fact, it would be almost impossible, but a machine can do that now.”

This technology has far-reaching consequences in helping tackle crime, for instance. “If you have CCTV images of people, they’re often very blurry images, and our AI can be used to find matches quickly – helping to identify potential terrorists or burglars. So it can be an important resource for the police.”

However, there are concerns that as AI programs become more complex and powerful, they could be hacked or hijacked, with some experts warning about the threat of drones being turned into missiles and fake videos being used to manipulate public opinion.

Prof Ugail is less alarmist but accepts there is a potential risk from AI viruses in the future. “For example, sooner or later we will have driverless cars but they will rely on state-of-the-art image recognition tools (to distinguish between pedestrians and road signs).

“So there may be scenarios where the system could be hacked and that would bring everything to a standstill,” he says.

“There might be a slight danger if the day comes when they can start thinking for themselves, but we are nowhere near that stage, we can’t give them self-consciousness or anything like that.”

Rather than viewing it in dystopian “them against us” terms, supporters of AI see our relationship with it as more symbiotic.

“The whole idea behind AI is to make things more efficient and streamlined and that’s why so many people are jumping on the bandwagon,” says Prof Ugail.

So what about the future? “It’s difficult to predict but I think for the next 50 to 100 years humanity will certainly benefit from AI.

“But if you go back 300 or 400 years, would people want to go back now to the way they were living then? Probably not. And when you look at the overall picture I think AI is for the betterment of humanity.”

Battle to prevent tech exploitation

Last month the Malicious Use of Artificial Intelligence report warned that AI is ripe for exploitation by rogue states, criminals and terrorists, and called on those designing AI systems to do more to mitigate possible misuses of their technology.

There is pressure, too, on governments to consider bringing in new laws. Speaking at the World Economic Forum in Davos in January, Prime Minister Theresa May said she wanted the UK to lead the world in deciding how artificial intelligence can be deployed in a safe and ethical manner.

In the same week Google picked France as the base for a new research centre dedicated to exploring how AI can be applied to health and the environment.